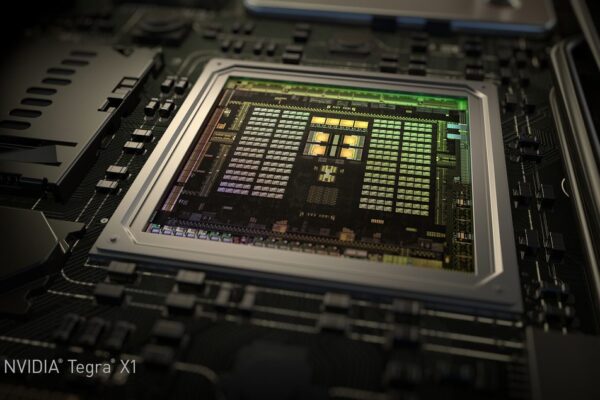

Nvidia computer as processing hub for self-driving cars

The Drive PX computer, initially announced in January at CES, will be available in May for automotive tier-one suppliers, research institutes and developers of Advanced Driver Assistance Systems (ADAS). Accommodating two Tegra X1 microprocessors and capable of processing the video signals of up to twelve cameras simultaneously, it enables ADAS developers to implement multiple driver assistance functions at the same time, including surround view, pedestrian detection, mirrorless driving, cross traffic monitoring and driver status monitoring.

According to Nvidia CEO Jen-Hsun Huang, the Drive PX will be able to take the feature set of driver assistance systems to the next level, beyond basic classification and driver alerting tasks. Its sheer computing power and memory capacity will enable it to run innovative and more powerful algorithms for advanced tasks associated with autonomous driving. For example, these algorithms will be able to differentiate a vehicle parked at the curb from one that is about to pull into traffic. "The car is not just sensing, but interpreting what is taking place around it – this is an essential capability for auto-piloted driving", Huang said.

To improve surround view functionality, the system supports advanced structure-from-motion (SFM) and advanced stitching for better image rendering, avoiding the ghosting and warping effects that result when multiple camera images are stitched together.

The development board itself already has some impressive features: it offers a throughput of 1.3 gigapixels per second, and its two microprocessors access 10 GByte of DRAM. Besides image processing and deep learning algorithms, it handles over-the-air updates, another technology that will be indispensable for future vehicle generations.
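To put the throughput figure in perspective, the following back-of-the-envelope sketch splits the quoted 1.3 gigapixels per second across the twelve supported cameras. The even split and the 30 frames-per-second rate are assumptions made purely for illustration; they are not figures from Nvidia, and the function name is hypothetical.

```python
def per_camera_budget(total_pixels_per_s, cameras, fps):
    """Split a total pixel throughput evenly across cameras.

    Returns (pixels per second per camera, pixels per frame per camera).
    The even split is an illustrative assumption, not a hardware property.
    """
    per_camera = total_pixels_per_s / cameras
    per_frame = per_camera / fps
    return per_camera, per_frame


TOTAL_THROUGHPUT = 1.3e9   # pixels per second, as quoted in the article
CAMERAS = 12               # maximum camera count quoted in the article
ASSUMED_FPS = 30.0         # assumption: typical video frame rate

per_camera, per_frame = per_camera_budget(TOTAL_THROUGHPUT, CAMERAS, ASSUMED_FPS)
print(f"Per camera: {per_camera / 1e6:.1f} megapixels/s")
print(f"Per frame at {ASSUMED_FPS:.0f} fps: {per_frame / 1e6:.2f} megapixels")
```

Under these assumptions, each camera would get roughly 108 megapixels per second, or about 3.6 megapixels per frame, which gives a rough sense of the sensor resolutions the board could serve with all twelve inputs active.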

Related news:

Piloted driving takes centre stage at Audi’s CES presentation

Audi TT – a step towards the software-defined car

More information:

Nvidia blogpost
