Deep learning method improves environment perception of self-driving cars

Abhinav Valada, holder of the junior professorship for Robot Learning at the Institute of Computer Science at the University of Freiburg, is investigating how self-driving cars can better perceive their environment. He and his team have now developed the innovative “EfficientPS” model, which uses artificial intelligence (AI) to recognise visual scenes more quickly and effectively.

The task of understanding scenes is usually solved with Deep Learning (DL), a machine learning technique in which artificial neural networks, inspired by the human brain, learn from large amounts of data. Public benchmarks play an important role in measuring the progress of these techniques. “For many years, research teams from companies like Google and Uber have been competing for the top spot in these benchmarks,” says Rohit Mohan from Valada’s team. However, the new method developed by the Freiburg computer scientists has now reached first place in Cityscapes, probably the most influential public benchmark for scene understanding methods in autonomous driving. EfficientPS is also listed in other benchmark data sets such as KITTI, Mapillary Vistas and IDD.

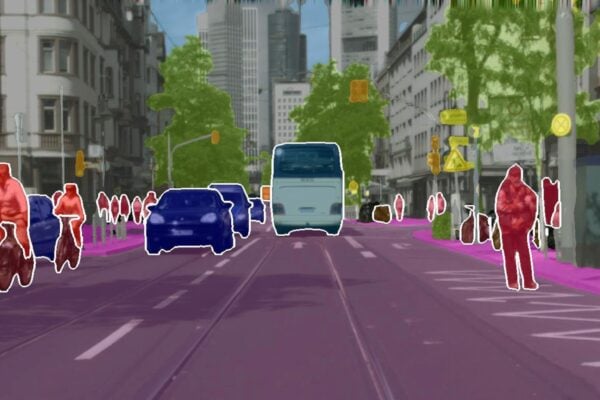

On the project’s website, Valada shows examples of how the team has trained different AI models on different datasets. The results are superimposed on the image captured by the camera, with the colours showing which object class the model assigns each pixel to. For example, cars are marked in blue, people in red, trees in green and buildings in grey. In addition, the AI model draws a frame around each object recognised as a separate entity. The Freiburg researchers have succeeded in training the model to transfer the information learned from urban scenes in one city to other urban backgrounds, in this case from Stuttgart to New York. Although the AI model did not know what a city in the USA might look like, it was able to accurately identify scenes from New York City.
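The kind of visualisation described above can be illustrated with a minimal Python sketch. It is not the EfficientPS code: the class ids, the colour table and the function names `overlay` and `instance_boxes` are hypothetical, and the "frame" around each object is assumed here to be a simple axis-aligned bounding box. The sketch takes a per-pixel class map and a per-pixel instance map, which a scene understanding model of this kind would be expected to produce, and turns them into a coloured overlay and one box per object.

```python
# Hypothetical class ids and RGB colours, loosely following the article's description.
CLASS_COLOURS = {
    0: (128, 128, 128),  # building: grey
    1: (0, 0, 255),      # car: blue
    2: (255, 0, 0),      # person: red
    3: (0, 255, 0),      # tree: green
}


def overlay(image, class_map, alpha=0.5):
    """Blend every RGB pixel of `image` with the colour of its predicted class.

    `image` is a list of rows of (r, g, b) tuples, `class_map` a list of rows
    of class ids with the same shape. `alpha` is the weight of the class colour.
    """
    blended = []
    for image_row, class_row in zip(image, class_map):
        out_row = []
        for pixel, class_id in zip(image_row, class_row):
            colour = CLASS_COLOURS[class_id]
            out_row.append(tuple(
                round((1 - alpha) * p + alpha * c)
                for p, c in zip(pixel, colour)
            ))
        blended.append(out_row)
    return blended


def instance_boxes(instance_map):
    """Return {instance_id: (top, left, bottom, right)} for every non-zero id.

    Assumption: id 0 marks pixels that belong to no separate object.
    """
    boxes = {}
    for y, row in enumerate(instance_map):
        for x, instance_id in enumerate(row):
            if instance_id == 0:
                continue
            if instance_id not in boxes:
                boxes[instance_id] = (y, x, y, x)
            else:
                top, left, bottom, right = boxes[instance_id]
                boxes[instance_id] = (
                    min(top, y), min(left, x), max(bottom, y), max(right, x)
                )
    return boxes
```

Calling `overlay(image, class_map)` returns a new image of the same size, and `instance_boxes(instance_map)` returns the corners of the frame to draw around each object. A real pipeline would typically use an array library rather than nested lists; plain Python is used here only to keep the sketch self-contained.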

Most previous methods that address this problem require large amounts of data and are too computationally intensive for real-world applications such as robotics, where the runtime environments are highly resource-constrained. “Our EfficientPS not only achieves high output quality, it is also the most computationally efficient and fastest method,” says Valada.

More information: https://panoptic.cs.uni-freiburg.de/

